Thu 16 April 2026

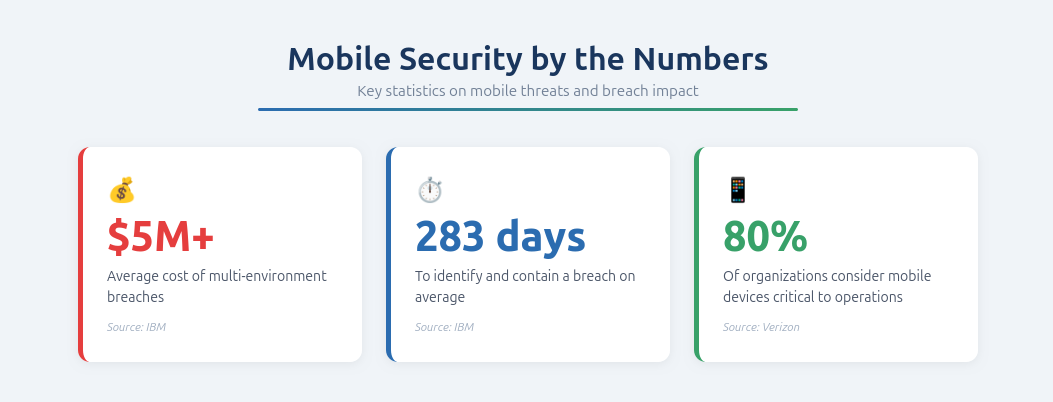

High-tech teams shipping mobile apps quickly need more than occasional scans or annual pentests. They need a mobile application security testing program that keeps pace with frequent releases, evolving APIs, third-party SDK updates, and the realities of iOS and Android delivery. That is what modern mobile AppSec testing best practices are really about: continuous validation, low-noise findings, and clear release decisions. The stakes are high. IBM reports that breaches spanning multiple environments cost more than USD 5 million on average and took 283 days to identify and contain, while Verizon reports that 80% of responding organizations consider mobile devices critical to their operations.

The challenge is that mobile apps fail in ways that generic application security programs often miss. Real risk tends to appear in authentication and session handling, on-device storage, deep links, WebViews, third-party SDK exposure, and the mobile-to-API contract. Verizon's DBIR notes that about 88% of breaches within one of its reported attack patterns involved stolen credentials, and NowSecure reports that more than 15% of assessed apps included components with known vulnerabilities. In fast-shipping environments, these issues are harder to catch when testing is disconnected from CI/CD, produces vague findings, or lacks the evidence engineering needs to reproduce and fix problems quickly.

This guide explains mobile app security testing best practices for high-tech teams that ship at scale. It covers what good mobile AppSec looks like, how to use MAST, SAST, and DAST together, what to test in real mobile attack surfaces, how to integrate testing into CI/CD, how to apply severity-based release gating, and how to evaluate a mobile AppSec solution.

What good mobile application security testing looks like in high-velocity environments

Good mobile application security testing does not mean “we ran a scan before release.” It means the organization has a repeatable way to validate mobile risk, produce findings engineers can act on, and make release decisions consistently across teams. In high-velocity environments, that definition has to include both security outcomes and operational outcomes.

Security outcomes

Strong mobile AppSec programs provide known coverage of the attack surfaces that matter most in production. That includes identity and session handling, insecure local storage, deep link security, WebView security, third-party SDK risk, and app-to-API interactions. The goal is not to claim abstract coverage. It is to know what is being tested, how often it is being tested, and what gaps still remain.

Good security outcomes also depend on reproducible findings. A finding that says “possible insecure behavior” is not enough for a mobile engineering team. A useful result shows what was tested, which flow was involved, what the app or backend did, and why that behavior creates risk. Without that detail, triage gets slower and findings become easier to ignore.

The third security outcome is a clear release policy. Teams should know which findings block a release, which ones become tracked remediation work, and what evidence is required before a fix is considered complete. Without that policy, testing creates activity but not predictability.

Operational outcomes

Operationally, good mobile AppSec reduces friction. Triage is faster because the findings are clearer. Release reviews are less chaotic because the severity policy is already defined. Engineering managers can plan more confidently because they are not negotiating from scratch every time a finding appears.

Good programs also reduce the noise tax. False positives, low-context alerts, and non-actionable output are especially costly for teams shipping quickly. When the signal becomes noisy, teams stop trusting the testing process itself. That makes even valid findings harder to prioritize.

For organizations managing multiple mobile apps, good mobile AppSec also means consistency across the portfolio. Shared severity thresholds, shared evidence standards, and shared workflow expectations are what make security scalable across teams.

MAST vs SAST vs DAST for mobile apps: what each covers and what each misses

No single testing method gives complete confidence in a modern mobile environment. Mobile risk often spans code, runtime behavior, device state, third-party components, and backend authorization. That is why effective mobile application security testing best practices rely on a layered model rather than a single approach.

SAST for mobile apps

SAST helps teams catch risky code patterns and insecure implementation choices early. It is useful for identifying unsafe API usage, weak cryptographic implementations, hardcoded secrets, and other issues that can be detected before runtime. Because it does not need a running app, it fits naturally into the earliest stages of a mobile pipeline.

But SAST has limits in mobile environments. It often lacks the runtime context needed to show whether a flaw is actually reachable, exploitable, or relevant in production. Many important mobile issues depend on app state, authenticated flows, device behavior, or backend responses that static analysis alone cannot validate.
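To make the SAST side concrete, here is a minimal sketch, assuming plain-text source (for example, Kotlin files or decompiled output), of the kind of pattern matching a SAST rule performs for hardcoded secrets. The two patterns and the `find_hardcoded_secrets` helper are illustrative assumptions and far simpler than a real SAST engine. A match also says nothing about whether the key is reachable or still valid, which is exactly the runtime-context gap described above.

```python
import re

# Illustrative, hypothetical rules only; production SAST rule sets are far broader.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_api_key": re.compile(
        r"""(?i)\b(api[_-]?key|secret|token)\b\s*[:=]\s*["']([^"']{16,})["']"""
    ),
}


def find_hardcoded_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) pairs for lines matching a secret pattern."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits


sample = '''
val baseUrl = "https://api.example.com"
val apiKey = "sk_live_0123456789abcdef0123"
val awsKey = "AKIAABCDEFGHIJKLMNOP"
'''
print(find_hardcoded_secrets(sample))
# [(3, 'generic_api_key'), (4, 'aws_access_key_id')]
```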

DAST for mobile apps

DAST helps by testing the application while it is running. It is useful for surfacing runtime behaviors, session handling issues, authorization problems, and interactions between the app and real endpoints. In mobile environments, this is essential because many meaningful issues only appear once the app is installed, authenticated, and moving through real workflows.

The weakness of generic DAST is that it may not understand mobile-specific behaviors deeply enough. If testing does not reach realistic app states, real identities, and representative backend conditions, its signal will be limited.

MAST for mobile apps

MAST is distinct because it is designed for mobile-specific entry points and attack surfaces. It focuses on areas such as deep links, WebViews, local storage, SDK exposure, transport and session behavior, and the mobile-to-API contract. That makes it particularly relevant for teams building and releasing native mobile apps at speed.

MAST matters because many critical mobile issues do not exist purely in source code or purely in network traffic. They appear in the relationship between the installed app, device behavior, and backend trust assumptions.

Recommended testing combinations

For most teams, the strongest approach is not choosing one method over another. It is using them together in a way that matches the release model.

| Operating model | Recommended mobile security testing approach |
|---|---|
| Fast-shipping consumer app | CI-triggered checks, scheduled deeper scans, severity-based release gating |
| Multi-app portfolio | Standardized testing pipeline, shared thresholds, centralized governance |
| Regulated environment | Continuous mobile testing with evidence-rich output and documented control execution |
| Mature mobile AppSec program | Layered SAST, DAST, and MAST aligned to development, release, and verification |

The practical takeaway is simple: SAST finds patterns, DAST validates runtime behavior, and MAST adds mobile-specific context. High-performing teams usually need all three.

Mobile application security testing checklist: what to test and why it breaks in production

A strong mobile app security checklist should focus on the places where mobile apps actually fail in production. These problems often appear at the boundaries: between app state and API state, between normal routing and deep links, between secure storage assumptions and what is really written to disk, or between approved SDK usage and what third-party code is doing at runtime.

Identity and session handling

Identity is one of the most important areas in mobile application security testing because it spans the device, app, authentication provider, and backend services. Teams should test:

- Token storage patterns

- Session invalidation after logout or password change

- Privilege boundaries across roles and tenants

- Auth-state desynchronization between app and API

A common mobile failure mode is when the UI appears logged out, but a previously issued token still works against backend services. Another is weak separation between users or tenants. These issues are difficult to assess without real app flows and real backend responses.
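The logged-out-but-still-valid failure mode can be expressed as a small check. The sketch below uses a hypothetical in-memory `FakeBackend` in place of a real API and compares a client-only logout with one that revokes the token server-side. In a real test, the same assertion would run against the actual backend with a real identity; the class and function names here are assumptions for illustration.

```python
import secrets
from typing import Callable


class FakeBackend:
    """Hypothetical stand-in for an auth-protected API (not a real service)."""

    def __init__(self) -> None:
        self.active_tokens: set[str] = set()

    def login(self) -> str:
        token = secrets.token_urlsafe(16)
        self.active_tokens.add(token)
        return token

    def revoke(self, token: str) -> None:
        self.active_tokens.discard(token)

    def get_profile(self, token: str) -> int:
        """Return an HTTP-like status code for a protected request."""
        return 200 if token in self.active_tokens else 401


def client_only_logout(backend: FakeBackend, token: str) -> None:
    # Anti-pattern: the app forgets the token locally; the backend never hears about it.
    pass


def server_side_logout(backend: FakeBackend, token: str) -> None:
    # Expected behavior: logout revokes the token on the server.
    backend.revoke(token)


def token_dies_on_logout(logout: Callable[[FakeBackend, str], None]) -> bool:
    """True if a token captured before logout is rejected after logout."""
    backend = FakeBackend()
    token = backend.login()
    assert backend.get_profile(token) == 200  # token works while logged in
    logout(backend, token)
    return backend.get_profile(token) == 401


print(token_dies_on_logout(client_only_logout))  # False: the stale token still works
print(token_dies_on_logout(server_side_logout))  # True
```

The key design point is that the test captures the token before logout and replays it afterwards, so it checks backend state rather than what the UI shows.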

Insecure local storage and secrets

Testing should validate whether the app stores tokens, credentials, sensitive user data, or internal secrets in insecure locations on iOS or Android. That includes:

- Local preferences and files

- Caches and temporary storage

- Logs and debug traces

- Misuse of key management features

A practical best practice is to define a default policy of no secrets on device unless explicitly justified. This area often breaks because convenience decisions around caching and persistence accumulate over time.
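One way to spot-check a no-secrets-on-device policy is to pull the app's data directory from a test device (for example, an Android app's `shared_prefs` folder) and search the extracted files for token-shaped strings. The sketch below is a minimal starting point under stated assumptions: it only looks for JWT-shaped values and a few credential-like key names, and the directory layout in the usage example is invented.

```python
import re
import tempfile
from pathlib import Path

# JWT-shaped strings: three base64url segments; the header usually starts with "eyJ".
JWT_PATTERN = re.compile(r"eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+")
# Hypothetical list of credential-like key names worth flagging.
KEY_PATTERN = re.compile(r"(?i)(password|refresh_token|access_token)")


def scan_extracted_storage(root: Path) -> list[str]:
    """Return relative paths of files that appear to contain credentials."""
    flagged = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        text = path.read_text(errors="ignore")
        if JWT_PATTERN.search(text) or KEY_PATTERN.search(text):
            flagged.append(path.relative_to(root).as_posix())
    return flagged


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "shared_prefs").mkdir()
    (root / "shared_prefs" / "session.xml").write_text(
        '<string name="auth">eyJhbGciOiJIUzI1NiJ9.eyJzdWIiOiIxIn0.c2lnbmF0dXJl</string>'
    )
    (root / "shared_prefs" / "settings.xml").write_text(
        '<boolean name="dark_mode" value="true" />'
    )
    print(scan_extracted_storage(root))  # ['shared_prefs/session.xml']
```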

Deep link security

Deep links are a major mobile attack surface because they create alternate entry points into the app. Teams should test whether deep links:

- Allow unauthorized access to internal routes

- Pass untrusted input into sensitive flows

- Behave inconsistently across public, authenticated, and privileged screens

- Create conflicts when multiple apps or handlers claim the same scheme

Deep links are especially vulnerable to drift because they are often added for onboarding, support, growth campaigns, and notifications.
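A common defensive pattern is to route every incoming deep link through a single allowlist that records which routes exist and which require an authenticated session. The sketch below assumes a hypothetical `myapp://open/...` link format, invented route names, and a single accepted query parameter. It shows the shape of the check that tests should exercise, not any specific framework's API.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical route table: path -> requires an authenticated session?
ALLOWED_ROUTES = {
    "/home": False,
    "/promo": False,
    "/account/settings": True,
}
ALLOWED_PARAMS = {"campaign"}  # hypothetical: the only query parameter links may carry


def resolve_deep_link(url: str, logged_in: bool) -> str:
    """Return the route to open, or a safe fallback for rejected links."""
    parsed = urlparse(url)
    if parsed.scheme != "myapp" or parsed.netloc != "open":
        return "/home"  # unknown scheme or host: ignore the link
    route = parsed.path
    if route not in ALLOWED_ROUTES:
        return "/home"  # internal or unknown route: never reachable by link
    if ALLOWED_ROUTES[route] and not logged_in:
        return "/login"  # privileged screen: force authentication first
    # Query parameters are untrusted input; reject anything unexpected.
    if set(parse_qs(parsed.query)) - ALLOWED_PARAMS:
        return "/home"
    return route
```

Useful test cases mirror the checklist: an internal route such as `myapp://open/debug/admin` should fall back to `/home`, `myapp://open/account/settings` should force `/login` when logged out, and an unexpected `redirect=` parameter should be rejected.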

WebView security

WebView security deserves dedicated testing because WebViews combine native app behavior with embedded web content. Teams should test:

- JavaScript bridges

- Content loading rules

- Mixed content handling

- Message handlers

- URL parameter injection paths

The risk is usually not just the existence of a WebView, but the trust assumptions around what content it loads and what native capabilities it can reach.
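Content loading rules usually come down to deciding which URLs a WebView may load, and a classic mistake is matching hosts with a naive substring check. The sketch below assumes a hypothetical allowlist of `example.com` and its subdomains and contrasts the naive check with a stricter one. On Android, logic like this would typically sit in a `WebViewClient.shouldOverrideUrlLoading` callback, which is not shown here.

```python
from urllib.parse import urlparse

ALLOWED_DOMAIN = "example.com"  # hypothetical first-party domain


def naive_is_allowed(url: str) -> bool:
    # Anti-pattern: a substring check accepts attacker-controlled hosts.
    return ALLOWED_DOMAIN in url


def is_allowed(url: str) -> bool:
    """Allow only HTTPS URLs whose host is the domain itself or a true subdomain."""
    parsed = urlparse(url)
    if parsed.scheme != "https":
        return False  # blocks http:// (mixed content), file://, javascript:, etc.
    host = (parsed.hostname or "").lower()
    return host == ALLOWED_DOMAIN or host.endswith("." + ALLOWED_DOMAIN)


for candidate in [
    "https://docs.example.com/faq",
    "https://example.com.attacker.net/",
    "https://example.com@attacker.net/",
    "http://example.com/",
]:
    print(candidate, naive_is_allowed(candidate), is_allowed(candidate))
```

Parsing the URL and comparing the parsed hostname matters because strings like `example.com@attacker.net` contain the trusted domain while actually pointing somewhere else.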

Third-party SDK risk

Third-party SDKs introduce additional code, endpoints, permissions, and data flows into the app. Mobile security testing should verify:

- What data SDKs collect and transmit

- What permissions they use

- Whether their versions are outdated

- Whether they introduce unexpected endpoints or new behaviors

Good governance starts with an approved SDK list and version policy, but testing is still necessary because SDK behavior often changes over time.
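An approved SDK list and version policy can be checked mechanically against each build's dependency inventory. The sketch below assumes a hypothetical policy format (SDK name mapped to a minimum approved version) and simple dotted numeric versions. Real manifests such as Gradle or CocoaPods files would need their own parsers, and a passing check does not replace runtime testing of what the SDK actually does.

```python
# Hypothetical approved-SDK policy: name -> minimum approved version.
APPROVED_SDKS = {
    "analytics-sdk": "4.2.0",
    "payments-sdk": "2.8.1",
}


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '4.10.2' into (4, 10, 2) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def check_sdk_policy(dependencies: dict[str, str]) -> list[str]:
    """Return human-readable policy violations for a build's SDK inventory."""
    violations = []
    for name, version in sorted(dependencies.items()):
        minimum = APPROVED_SDKS.get(name)
        if minimum is None:
            violations.append(f"{name} {version}: not on the approved list")
        elif parse_version(version) < parse_version(minimum):
            violations.append(f"{name} {version}: below minimum {minimum}")
    return violations


build_inventory = {"analytics-sdk": "4.10.0", "payments-sdk": "2.7.9", "ads-sdk": "1.0.0"}
for violation in check_sdk_policy(build_inventory):
    print(violation)
```

Comparing tuples rather than strings is deliberate: as plain strings, "4.10.0" sorts before "4.2.0".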

Mobile-to-API contract testing

Some of the most important mobile vulnerabilities appear in the relationship between the app and backend APIs. Teams should test:

- Real endpoint coverage

- Authorization boundaries

- Object access controls

- Token and session behavior

- Input handling under realistic conditions

This area matters because the app and backend are often built or changed at different speeds. The resulting mismatches are a frequent source of exploitable flaws.
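Object access controls are easiest to test as an explicit matrix: each identity requests each object, and any access by a non-owner is flagged. The sketch below assumes a hypothetical `fetch(user, object_id)` callable that returns an HTTP status code. Here it is backed by a deliberately vulnerable in-memory function so the check has something to find.

```python
from typing import Callable

# Hypothetical ownership data for the example.
OWNERS = {"order-1": "alice", "order-2": "bob"}


def vulnerable_fetch(user: str, object_id: str) -> int:
    # Bug: checks that the object exists, but not that the caller owns it.
    return 200 if object_id in OWNERS else 404


def find_authorization_violations(
    fetch: Callable[[str, str], int], users: list[str]
) -> list[tuple[str, str]]:
    """Return (user, object_id) pairs where a non-owner was granted access."""
    violations = []
    for user in users:
        for object_id, owner in OWNERS.items():
            if user != owner and fetch(user, object_id) == 200:
                violations.append((user, object_id))
    return violations


print(find_authorization_violations(vulnerable_fetch, ["alice", "bob"]))
# [('alice', 'order-2'), ('bob', 'order-1')]
```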

Evidence-rich mobile security findings: how to speed up remediation

Finding an issue is only half the job. Effective mobile security testing best practices require findings that engineering can reproduce and fix quickly. If the output is vague or lacks context, triage slows down and confidence drops.

A strong mobile finding should include:

- What ran and where

- Build or version tested

- App and identity state

- Exact reproduction steps

- Requests, payloads, and responses where relevant

- Supporting logs or screenshots when useful

- Clear remediation direction or issue classification

A useful standard here is the no-guesswork rule: if the receiving team has to guess what happened, why it matters, or how to reproduce it, the finding is not ready. This matters even more in mobile because issues often depend on runtime state, navigation order, device conditions, and backend behavior.
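The no-guesswork rule can be enforced before a finding reaches a ticket by treating the evidence list above as required fields. The sketch below uses a hypothetical `Finding` record whose field names mirror this section's checklist rather than any particular tool's schema. Request/response evidence is kept optional because the checklist only asks for it "where relevant".

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    """Hypothetical finding record; fields mirror the evidence checklist."""

    title: str
    severity: str
    environment: str = ""      # what ran and where
    build_version: str = ""    # build or version tested
    identity_state: str = ""   # app and identity state
    repro_steps: list[str] = field(default_factory=list)
    evidence: list[str] = field(default_factory=list)  # requests, responses, logs, screenshots
    remediation: str = ""      # remediation direction or issue classification


REQUIRED = ("environment", "build_version", "identity_state", "repro_steps", "remediation")


def missing_evidence(finding: Finding) -> list[str]:
    """Return the required fields that are still empty."""
    return [name for name in REQUIRED if not getattr(finding, name)]


draft = Finding(title="Token valid after logout", severity="High", build_version="5.3.0 (1234)")
print(missing_evidence(draft))
# ['environment', 'identity_state', 'repro_steps', 'remediation']
```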

Evidence quality also supports governance. If findings are evidence-rich and reproducible, release owners can make better severity decisions and verify fixes more confidently.

Mobile security testing in CI/CD: how to operationalize without blocking delivery by default

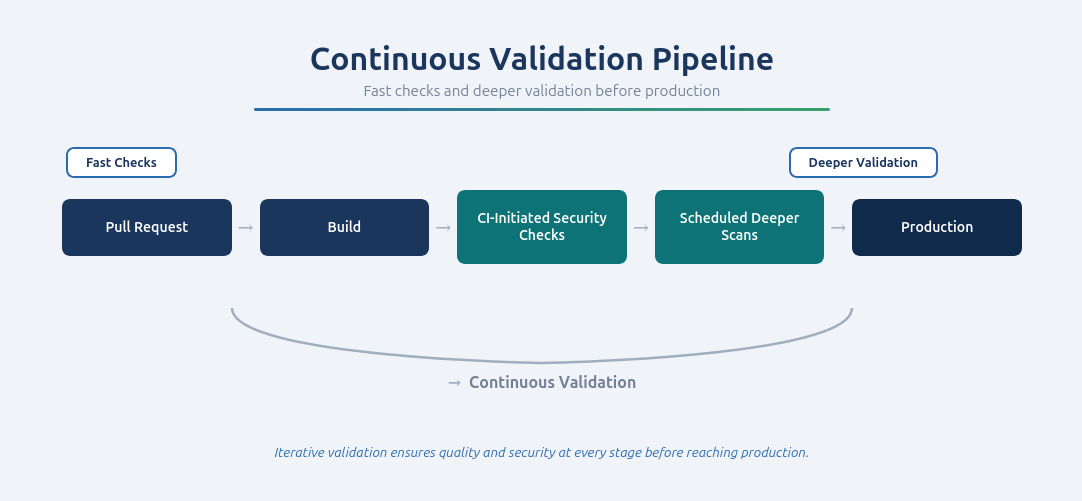

The best mobile security testing CI/CD model combines speed, coverage, and governance. Security checks need to run often enough to keep pace with releases, but not in a way that turns every build into a bottleneck.

Pattern 1: CI-triggered testing

CI-triggered testing gives teams fast feedback when code or builds change. These checks may run on pull requests, merges, or release branches depending on the release workflow. The key is to keep early checks fast and relevant, while saving deeper testing for later stages.
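One way to keep early checks fast while reserving deeper testing for later stages is to select the test tier from the pipeline event. The event names, the `release/` branch convention, and the check names below are all hypothetical; most CI systems expose equivalent information through their own environment variables.

```python
def select_checks(event: str, branch: str) -> list[str]:
    """Map a pipeline event to a set of security checks (hypothetical tiers)."""
    fast = ["static_rules", "secret_scan", "sdk_policy"]
    deep = fast + ["authenticated_dynamic_scan", "deep_link_tests", "api_authz_matrix"]
    if event == "pull_request":
        return fast  # keep pull-request feedback quick
    if event == "push" and branch.startswith("release/"):
        return deep  # release candidates get the full suite
    if event == "push" and branch == "main":
        return fast + ["authenticated_dynamic_scan"]
    return []  # other events: rely on scheduled scans
```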

Pattern 2: Scheduled scans

Scheduled scans are essential because they catch drift. Mobile apps change through SDK updates, backend changes, new endpoints, and accumulated release changes over time. Scheduled testing provides a recurring baseline that complements per-change workflows.
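Drift between scheduled runs can be surfaced by diffing what each scan observed against the previous baseline. The sketch below assumes each scan produces a set of contacted endpoints (the hostnames are invented); the same comparison works for SDK inventories or requested permissions.

```python
def detect_drift(baseline: set[str], current: set[str]) -> dict[str, list[str]]:
    """Compare two scan inventories and report what appeared or disappeared."""
    return {
        "added": sorted(current - baseline),
        "removed": sorted(baseline - current),
    }


# Hypothetical endpoint inventories from two scheduled scans.
last_week = {"api.example.com", "cdn.example.com", "metrics.vendor-a.example"}
this_week = {"api.example.com", "cdn.example.com", "collect.vendor-b.example"}
print(detect_drift(last_week, this_week))
# {'added': ['collect.vendor-b.example'], 'removed': ['metrics.vendor-a.example']}
```

A new third-party endpoint that no code change explains is the kind of SDK-driven drift that per-change checks can miss.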

Pattern 3: Severity-based release gating

Release gating makes testing actionable. A common policy is that Critical and High findings block release until fixed and verified, while Medium and lower findings flow into remediation pipelines. This gives teams a way to preserve velocity without treating security as optional.
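The gating policy above is simple enough to express directly. The sketch below assumes findings arrive as dictionaries with hypothetical `id`, `severity`, and `status` keys. A Critical or High finding only stops blocking once it is marked as verified fixed, matching the "fixed and verified" wording of the policy.

```python
# Hypothetical finding format: dicts with "id", "severity", and "status" keys.
BLOCKING_SEVERITIES = {"Critical", "High"}


def release_decision(findings: list[dict]) -> tuple[bool, list[str]]:
    """Return (may_release, ids_of_blocking_findings)."""
    blockers = [
        f["id"]
        for f in findings
        if f["severity"] in BLOCKING_SEVERITIES and f["status"] != "verified_fixed"
    ]
    return (not blockers, blockers)


findings = [
    {"id": "F-1", "severity": "High", "status": "fixed"},  # fixed but not yet retested
    {"id": "F-2", "severity": "Critical", "status": "verified_fixed"},
    {"id": "F-3", "severity": "Medium", "status": "open"},  # goes to remediation, not the gate
]
print(release_decision(findings))  # (False, ['F-1'])
```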

Workflow integration checklist

A scalable mobile AppSec workflow usually integrates with:

- CI/CD platforms

- Issue tracking systems

- Collaboration and notification tools

- SSO and access management

- Source control and release workflows

Ostorlab’s High-Tech solution is positioned around continuous testing in the development pipeline, reproducible proof-backed findings, continuous validation across every release, and integrations with platforms like Jira, GitHub, GitLab, Jenkins, Bitrise, Slack, ServiceNow, Okta, and Azure DevOps.


Severity-based release gating for mobile apps: a practical policy

A good release policy turns testing into action. Without one, teams end up debating findings under deadline pressure instead of following a known process.

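A practical model looks like this: Critical and High findings block release until they are fixed and the fix is verified, while Medium, Low, and Informational findings become tracked remediation work rather than release blockers. The evidence required to consider a fix complete is defined up front, not negotiated per finding.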

This model avoids two common failure modes. The first is blocking releases for everything, which creates fatigue and makes security feel like a constant obstacle. The second is never blocking anything, which turns governance into a symbolic process instead of a real control.

For high-velocity teams, the right balance is clear severity thresholds, trusted evidence, and predictable handling across apps and teams.

Bumble case study: continuous mobile security testing at release velocity

Bumble’s public case study shows how continuous mobile security testing can be embedded directly into the iOS and Android release process. According to the case study, Bumble runs CI-initiated scans along with scheduled scans so it can validate security continuously as the apps change over time, not just at major release points.

The case also shows a practical release policy. High and Critical findings block release until remediation is completed and the issue is confirmed resolved, while Medium, Low, and Informational findings flow into the remediation pipeline instead of automatically blocking delivery. Bumble also emphasizes traceability from scan summary to raw evidence so engineers can reproduce issues quickly and reduce triage ambiguity.


Compliance and regulatory considerations for mobile AppSec

Compliance does not replace mobile application security testing. It changes what organizations must evidence and how they govern releases. Mobile apps process personal data, rely on identifiers, embed third-party SDKs, and often operate across multiple regions, which means compliance requirements frequently influence mobile AppSec operating models.

Privacy and data protection

GDPR affects how teams think about telemetry, personal data, identifiers, and third-party data sharing in Europe. CCPA/CPRA has similar implications in California-focused contexts. In both cases, teams need better visibility into data handling, SDK behavior, storage, and unintended exposure.

Security and resilience

Frameworks such as NIS2 and DORA raise expectations around cyber risk management, resilience, third-party oversight, and governed release processes. For mobile teams, that makes ad hoc testing harder to justify.

Sector-driven requirements

HIPAA matters when mobile apps handle protected health information. PCI DSS matters when apps participate directly in payment card flows. In both cases, testing around storage, sessions, third-party components, and sensitive workflows becomes even more important.

Assurance frameworks

SOC 2 and ISO 27001 do not define mobile-specific test cases, but they reinforce the need for repeatable security controls, clear workflows, and documented evidence that issues are being handled consistently.

The key point is simple: compliance increases the importance of continuous testing, evidence-rich findings, and severity-based release governance.

How to evaluate a mobile AppSec testing solution

Choosing a mobile AppSec testing solution is not just a tooling decision. It is also a workflow, coverage, and governance decision. The right platform should help teams validate real mobile risk continuously, produce findings engineers can reproduce quickly, and support predictable release decisions across multiple apps and teams.

1. Coverage: does it reflect real mobile risk?

A strong solution should cover deep links, WebViews, on-device storage, authenticated flows, third-party SDK exposure, and app-to-API interactions. Generic app security capabilities are not enough if they miss the attack surfaces that matter most in production.

2. Evidence quality: can teams reproduce and fix findings quickly?

Look for evidence-rich findings with reproduction steps, request/response context, and enough runtime detail to remove guesswork. Good evidence is what turns testing into faster remediation instead of more triage burden.

3. Delivery fit: does it work in the way teams actually ship?

The solution should support CI/CD workflows, scheduled validation, and severity-based release gating. It should also integrate with issue tracking, collaboration tools, and portfolio-level governance models.

4. Noise handling: can teams trust the signal?

High-velocity teams cannot absorb large volumes of low-confidence output. A strong solution should help reduce false positives, de-duplicate weak findings, and prioritize issues that matter under real app conditions.

Questions buyers should ask

- Does the solution cover deep links, WebViews, local storage, SDK exposure, authenticated flows, and app-to-API interactions?

- Can it produce evidence-rich findings engineers can reproduce quickly?

- Does it support CI/CD, scheduled scans, and release gating?

- Can it scale across multiple apps and teams?

- How does it reduce false positives and triage ambiguity?

- Does it stay relevant to real endpoints and real application behavior?

Ostorlab’s High-Tech solution is positioned around deep coverage of modern mobile attack surfaces, end-to-end visibility from app to backend services, reproducible proof-backed findings, and continuous validation across every release.

The future of mobile app security testing

The future of mobile app security testing is moving beyond periodic scans and isolated point-in-time reviews. As mobile apps become more reliant on APIs, third-party SDKs, complex user workflows, and runtime logic, security teams need testing that reflects how applications actually behave in production. The shift is toward continuous, exploitability-focused testing that can validate real attack paths instead of only flagging theoretical issues.

That shift matters because many of the vulnerabilities that create real business risk are no longer simple coding mistakes. Modern mobile flaws often depend on authentication state, session transitions, onboarding and payment flows, API authorization logic, and trust boundaries across the app, backend services, and embedded SDKs. Traditional static checks and generic runtime scanning still play an important role, but they do not always reveal whether an issue is truly exploitable under realistic conditions.

This is where the next generation of AI-powered mobile security testing becomes more important. The market is moving toward deeper, workflow-aware testing that can explore application behavior, follow complex flows, and help teams distinguish high-impact vulnerabilities from noisy findings. For fast-shipping teams, that means a better signal: fewer abstract alerts and more evidence tied to real exploitability.

Ostorlab’s Agentic Deep Scan reflects that direction. Ostorlab positions it as a next-generation, AI-powered mobile app security testing scanner for iOS and Android that simulates real-world attacks to detect truly exploitable vulnerabilities across mobile apps, supporting APIs, and embedded SDKs, with proof-grade evidence and verification retesting after remediation. Ostorlab also highlights support for testing authenticated flows, including 2FA and OTP, which is critical for identifying mobile vulnerabilities that only appear in real user states and protected workflows.

For high-tech teams, this changes the role of mobile AppSec. Instead of acting mainly as a reporting layer, security testing becomes a way to validate what is genuinely exploitable, why it matters, and whether a fix actually resolved the issue. That shortens the path from detection to remediation and makes security testing more useful inside fast release cycles.

The long-term direction is clear: mobile security testing will increasingly be defined by real-world attack simulation, exploitability-aware validation, stronger runtime context, and lower-noise findings that engineering teams can act on quickly. Teams that adopt that model will be better positioned to secure iOS and Android releases at scale without adding unnecessary friction to delivery.
